Designing AI-assisted pipelines across pre-production, real-time production, and post-production.

My work in AI focuses on building computational systems and integrating them into existing production workflows, prioritizing reliability, spatial coherence, and collaboration over novelty. Every experiment I build is evaluated against production constraints: latency, controllability, repeatability, and integration with existing CG and VP pipelines.

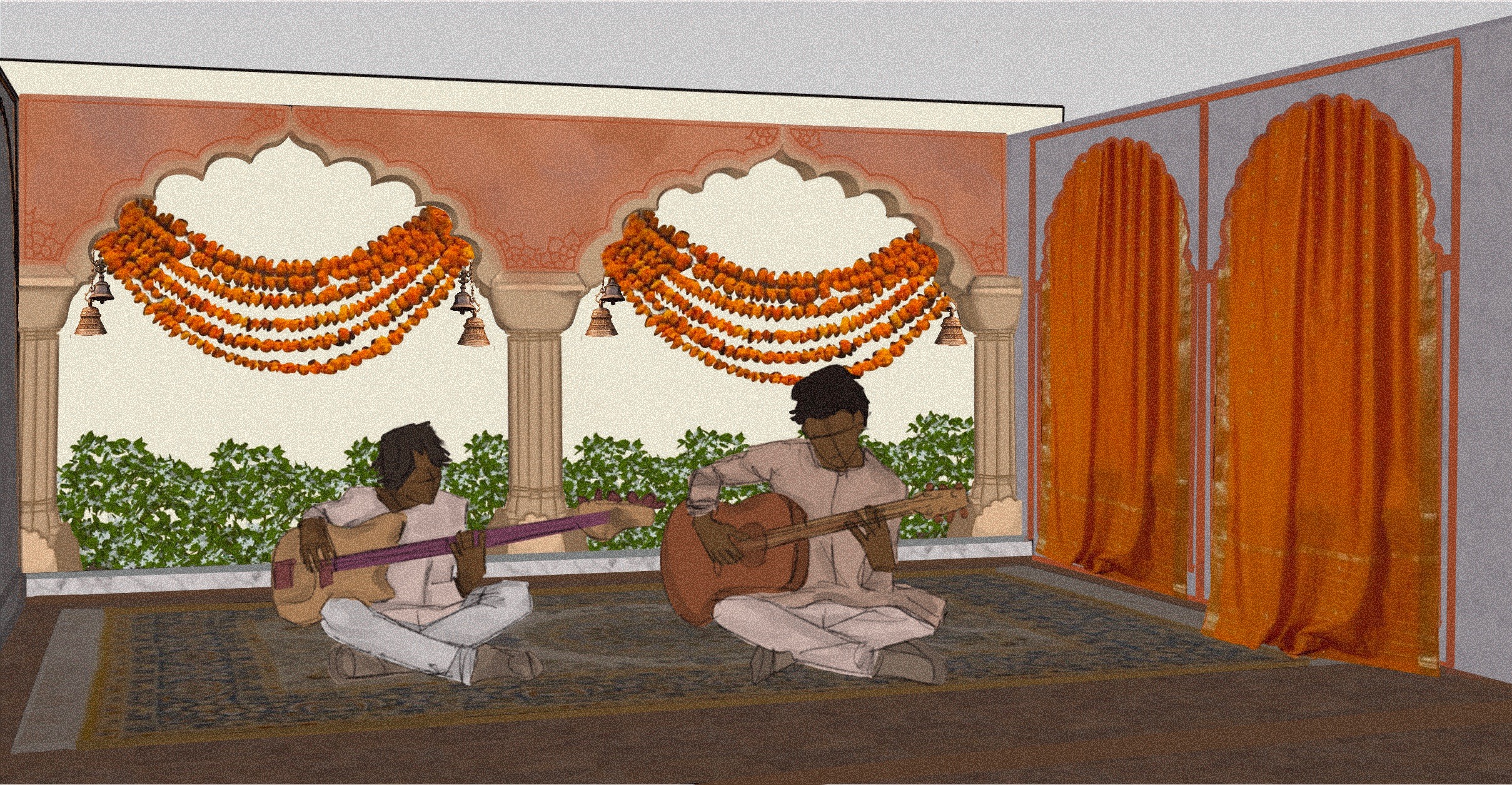

Directing and actively building an AI-assisted virtual production pipeline aimed at making previs and shot planning more efficient when real-world reference data is limited. The system integrates Unreal Engine, LiDAR/photogrammetry, and AI-assisted Gaussian splat reconstruction (via tools such as World Labs) to convert small sets of stylized location imagery into metrically grounded, navigable previs environments. This workflow supports early-stage camera blocking, environment validation, and creative alignment across departments when traditional scouting or full photogrammetry is impractical.

Independent research into AI style transfer and consistency, using custom-trained LoRAs and ComfyUI pipelines to understand the limitations of AI outputs in production contexts.

Designed a scroll-driven image-sequence system for real-time visual storytelling on the web, optimized for performance, preloading, and perceptual smoothness.
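
As a rough illustration of the core idea, the TypeScript sketch below maps a scroll offset to a frame index and picks which neighboring frames to preload first. The function names (`frameIndexForScroll`, `preloadOrder`) and the `radius` parameter are hypothetical and chosen for this example; this is a minimal sketch under those assumptions, not the project's actual code.

```typescript
// Hypothetical helpers for illustration only; not taken from the project.

/**
 * Map a scroll offset to a frame index in [0, frameCount - 1].
 * scrollRange is the total scrollable distance that drives the sequence.
 */
function frameIndexForScroll(
  scrollTop: number,
  scrollRange: number,
  frameCount: number
): number {
  if (frameCount <= 0) {
    throw new RangeError("frameCount must be positive");
  }
  if (scrollRange <= 0) {
    return 0; // nothing to scroll through, so show the first frame
  }
  // Clamp progress to [0, 1] so overscroll never produces an invalid index.
  const progress = Math.min(1, Math.max(0, scrollTop / scrollRange));
  return Math.min(frameCount - 1, Math.floor(progress * frameCount));
}

/**
 * List the frames to preload around the current one, nearest first,
 * checking the frame ahead before the frame behind at each distance.
 */
function preloadOrder(
  current: number,
  frameCount: number,
  radius: number
): number[] {
  const order: number[] = [];
  for (let d = 1; d <= radius; d++) {
    if (current + d < frameCount) order.push(current + d);
    if (current - d >= 0) order.push(current - d);
  }
  return order;
}
```

In a browser, one plausible way to use these is to call `frameIndexForScroll` from a scroll listener, schedule the draw with `requestAnimationFrame`, and fetch the frames returned by `preloadOrder` in the background so the next images are already decoded when the user reaches them.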